Maybe that app could help people use pull-requests during editorial reviews, much like how tests help developers with code reviews.

It led to a rather simple question:

What do I have to do to get the following to work?

OK, so that’s what I want to do. I researched and built the pieces, but when it came time to actually build the entire Node app, I stopped short.

That makes this a half-baked idea: I’ve verified that the individual pieces of tech work, but I’m stopping because I don’t want to build an entire product.

What tech would I need to build in order to do this? Here are the broad strokes:

0. Write-Good: the npm module to test our READMEs

There’s a rather clever npm module, write-good, that flags weak prose such as passive voice and weasel words. You can call it from Node or run it as a CLI against your Markdown files.

I figure we can use this to test a repo’s README.
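write-good’s README shows its Node API as `writeGood(text)`, returning an array of suggestions shaped like `{ index, offset, reason }`. Below is a minimal sketch, assuming that shape, of turning those suggestions into test-runner-style "line:column reason" output plus a pass/fail flag. `lintReadme` is a hypothetical name, and the checker is passed in as a parameter so the sketch runs without the package installed.

```javascript
// Convert a character offset into a 1-based line and column.
function lineAndColumn(text, index) {
  const lines = text.slice(0, index).split("\n");
  return { line: lines.length, column: lines[lines.length - 1].length + 1 };
}

// Hypothetical helper: `check` is expected to behave like write-good,
// returning [{ index, offset, reason }, ...] for the given text.
function lintReadme(text, check) {
  const messages = check(text).map(({ index, reason }) => {
    const { line, column } = lineAndColumn(text, index);
    return `${line}:${column} ${reason}`;
  });
  // No suggestions means the README "passes", like a green test suite.
  return { passed: messages.length === 0, messages };
}
```

With the package installed, you would call it as `lintReadme(fs.readFileSync("README.md", "utf8"), require("write-good"))` and treat `passed: false` as a failing build.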

1. GitHub API: AccessToken with repo:status access

We’ll need to get an AccessToken since each of our GitHub API calls will require it. We can either:

- Create a personal access token

- Use OAuth to sign in. If you were building out an application, this is what you’d need to do.

For personal access tokens, you can follow instructions here.

For OAuth sign-in, you can duck-duck-go for “Sign in With GitHub”. In Rails, OmniAuth is the dominant approach. In Node/Express land, I found the options less hospitable, but the npm package passport-github2 is very well done.
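passport-github2 handles the OAuth dance for you, but it helps to see what it starts with. A minimal sketch of the first step of GitHub’s OAuth web flow: redirecting the user to `https://github.com/login/oauth/authorize` with the scopes the app needs. The client ID, redirect URL, and the exact scope list (`repo:status` for statuses, `write:repo_hook` for webhooks) are assumptions for illustration, and `buildAuthorizeUrl`/`githubHeaders` are hypothetical helpers.

```javascript
const crypto = require("crypto");

// Hypothetical helper: the URL that starts GitHub's OAuth web flow.
function buildAuthorizeUrl({ clientId, redirectUri, scopes, state }) {
  const url = new URL("https://github.com/login/oauth/authorize");
  url.searchParams.set("client_id", clientId);
  url.searchParams.set("redirect_uri", redirectUri);
  url.searchParams.set("scope", scopes.join(" ")); // scopes are space-separated
  url.searchParams.set("state", state); // check this value again on the callback
  return url.toString();
}

// Random, unguessable value that protects the callback against CSRF.
function newState() {
  return crypto.randomBytes(16).toString("hex");
}

// Whichever way you get the token (personal or OAuth), every API call sends it.
function githubHeaders(token) {
  return {
    Authorization: `Bearer ${token}`,
    Accept: "application/vnd.github+json",
    "User-Agent": "readme-lint-sketch", // GitHub's REST API requires a User-Agent
  };
}

// Example values only; the scope list is an assumption.
const authorizeUrl = buildAuthorizeUrl({
  clientId: "YOUR_CLIENT_ID",
  redirectUri: "http://localhost:3000/auth/github/callback",
  scopes: ["repo:status", "write:repo_hook"],
  state: newState(),
});
```

After the user approves, GitHub redirects back with a `code`, which your server exchanges for the access token at `https://github.com/login/oauth/access_token`.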

2. GitHub GraphQL: list of repos to activate

You’ll want your app to get notified when a pull-request is created or updated so you can run your “test suite”.

I imagine that after your user signs in, you’ll present them with a list of their repos. How do you get their repository list? I used the GraphQL endpoint.
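The original query isn’t preserved here, so this is a sketch of one way to do it, assuming Node 18+ (global `fetch`) and a token with enough scope to see the repos. It asks for `viewer.repositories` 100 at a time and follows `pageInfo.endCursor` until there are no more pages. Restricting to `affiliations: [OWNER]` is an assumption; widen it if you want collaborator or organization repos too.

```javascript
const REPOS_QUERY = `
  query ($after: String) {
    viewer {
      repositories(first: 100, after: $after, affiliations: [OWNER]) {
        nodes { nameWithOwner }
        pageInfo { hasNextPage endCursor }
      }
    }
  }`;

function buildReposRequest(token, after = null) {
  return {
    url: "https://api.github.com/graphql",
    options: {
      method: "POST",
      headers: { Authorization: `Bearer ${token}`, "Content-Type": "application/json" },
      body: JSON.stringify({ query: REPOS_QUERY, variables: { after } }),
    },
  };
}

// GraphQL reports problems in an `errors` array, often with HTTP 200.
function parseReposPage(json) {
  if (json.errors) throw new Error(json.errors.map((e) => e.message).join("; "));
  const repos = json.data.viewer.repositories;
  return {
    names: repos.nodes.map((r) => r.nameWithOwner),
    nextCursor: repos.pageInfo.hasNextPage ? repos.pageInfo.endCursor : null,
  };
}

// Fetch every page and return names like "octo/docs".
async function listRepos(token) {
  const names = [];
  let cursor = null;
  do {
    const { url, options } = buildReposRequest(token, cursor);
    const res = await fetch(url, options);
    if (!res.ok) throw new Error(`GitHub GraphQL returned ${res.status}`);
    const page = parseReposPage(await res.json());
    names.push(...page.names);
    cursor = page.nextCursor;
  } while (cursor);
  return names;
}
```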


3. GitHub API: WebHook: know when a pull-request happens

Your user would switch a repo on, at which point you’d POST to GitHub’s webhook API to register a webhook for that repo.
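The original request isn’t preserved here, so this is a sketch of the registration call using GitHub’s REST endpoint `POST /repos/{owner}/{repo}/hooks`, assuming Node 18+ `fetch`. The webhook URL (for example a viasocket, webhookrelay, or ngrok address) and the shared secret are values you supply; `buildCreateHookRequest` is a hypothetical helper name.

```javascript
// Hypothetical helper: subscribe one repository to pull_request events.
function buildCreateHookRequest({ token, owner, repo, webhookUrl, secret }) {
  return {
    url: `https://api.github.com/repos/${encodeURIComponent(owner)}/${encodeURIComponent(repo)}/hooks`,
    options: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${token}`,
        Accept: "application/vnd.github+json",
        "Content-Type": "application/json",
        "User-Agent": "readme-lint-sketch",
      },
      body: JSON.stringify({
        name: "web", // repository webhooks always use "web"
        active: true,
        events: ["pull_request"], // opened, synchronize (new commits), and so on
        config: { url: webhookUrl, content_type: "json", secret },
      }),
    },
  };
}

async function createHook(params) {
  const { url, options } = buildCreateHookRequest(params);
  const res = await fetch(url, options);
  if (res.status !== 201) throw new Error(`Creating webhook failed: ${res.status}`);
  return res.json(); // includes the hook's id, handy for deleting it when the repo is switched off
}
```

The `secret` matters later: GitHub signs every delivery with it, which is how your app can tell a real event from a forged one.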


A fun hack here is to actually post to viasocket.com. It stores your webhooks and lets you inspect, change, and replay them. When you’re trying to diagnose how to handle a webhook, there’s not much more frustrating than having to recreate an entire scenario just to test the typo you just fixed.

A second fun hack is to use https://webhookrelay.com/ or https://ngrok.com to get webhooks to reach your local development machine. You’d definitely want to do that here.

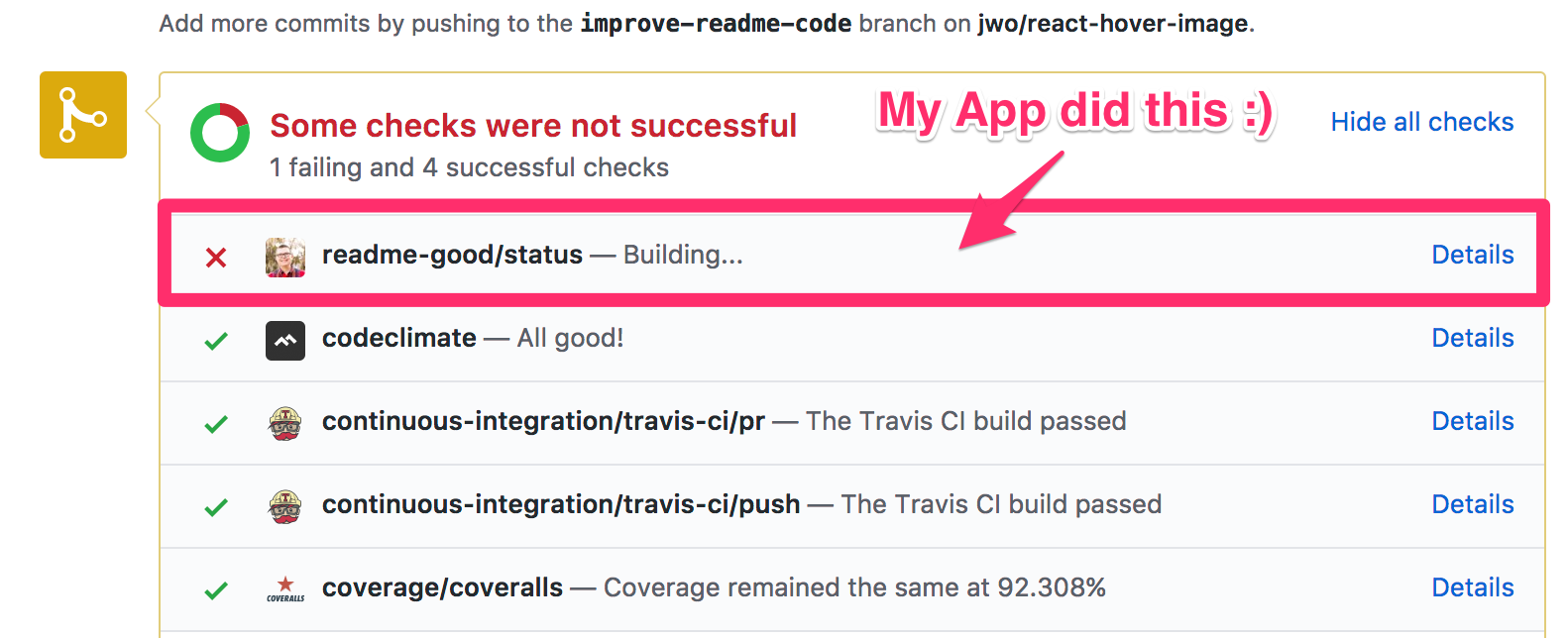

4. GitHub API: Status Update: a build has started

OK, so there’s a new pull-request on a repo which your app is watching, and GitHub has told you about it.
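When the delivery arrives, two checks come first: is it really from GitHub, and is it a pull-request event worth building? A minimal sketch, assuming the secret you registered with the webhook: GitHub sends an `X-Hub-Signature-256` header containing `sha256=` plus the hex HMAC-SHA256 of the raw request body, and the event name in `X-GitHub-Event`. Treating only `opened`, `synchronize`, and `reopened` as build triggers is an assumption, and the helper names are hypothetical.

```javascript
const crypto = require("crypto");

// Compare GitHub's signature with our own HMAC of the raw body.
function isValidSignature(rawBody, signatureHeader, secret) {
  if (typeof signatureHeader !== "string") return false;
  const expected = "sha256=" + crypto.createHmac("sha256", secret).update(rawBody).digest("hex");
  const a = Buffer.from(expected);
  const b = Buffer.from(signatureHeader);
  // timingSafeEqual throws if lengths differ, so check length first.
  return a.length === b.length && crypto.timingSafeEqual(a, b);
}

const BUILD_ACTIONS = new Set(["opened", "synchronize", "reopened"]);

// Returns the job to build, or null if this event should be ignored.
function buildJobFromEvent(eventName, payload) {
  if (eventName !== "pull_request" || !BUILD_ACTIONS.has(payload.action)) return null;
  const [owner, repo] = payload.repository.full_name.split("/");
  return { owner, repo, number: payload.pull_request.number };
}
```

With Express, the HMAC must be computed over the raw bytes, so the route needs something like `express.raw({ type: "application/json" })` rather than the parsed JSON body.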

We’ll want to use the GitHub API (REST v3) to notify GitHub that we’re starting a build. That’s what adds our app to the list of builds. GitHub isn’t waiting for us; we tell it when we start a build, and that sets the status to “pending”.
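The original request isn’t preserved here, so this is a sketch of the status call using GitHub’s REST endpoint `POST /repos/{owner}/{repo}/statuses/{sha}`, assuming Node 18+ `fetch`. The `context` string is what GitHub shows as the build’s name on the pull request; `"editorial/write-good"` is a made-up value, and `targetUrl` (optional) could point at a hypothetical page for the build.

```javascript
const STATUS_CONTEXT = "editorial/write-good"; // hypothetical build name
const STATES = ["error", "failure", "pending", "success"]; // states GitHub accepts

function buildStatusRequest({ token, owner, repo, sha, state, description, targetUrl }) {
  if (!STATES.includes(state)) throw new Error(`Unknown status state: ${state}`);
  return {
    url: `https://api.github.com/repos/${encodeURIComponent(owner)}/${encodeURIComponent(repo)}/statuses/${sha}`,
    options: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${token}`,
        Accept: "application/vnd.github+json",
        "Content-Type": "application/json",
        "User-Agent": "readme-lint-sketch",
      },
      // target_url is dropped from the JSON when undefined
      body: JSON.stringify({ state, description, target_url: targetUrl, context: STATUS_CONTEXT }),
    },
  };
}

// Tell GitHub the build has started, so the check shows up as "pending".
async function markPending({ token, owner, repo, sha, targetUrl }) {
  const { url, options } = buildStatusRequest({
    token, owner, repo, sha, targetUrl,
    state: "pending",
    description: "Checking README prose",
  });
  const res = await fetch(url, options);
  if (res.status !== 201) throw new Error(`Status update failed: ${res.status}`);
}
```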


5. GitHub API: GraphQL: git commit

We’re going to need to clone the repository at the pull-request level to run tests. Which SHA should we use after we clone? We’ll ask GraphQL for what we need.

Note: we’ll know from the webhook which pull request number is in question.
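A sketch of that GraphQL lookup, assuming Node 18+ `fetch`: given the owner, repo name, and pull request number, ask for the pull request’s `headRefOid` (the SHA of its latest commit), `headRefName`, and the head repository’s URL, which also covers pull requests opened from forks. The helper names are hypothetical.

```javascript
const PR_HEAD_QUERY = `
  query ($owner: String!, $name: String!, $number: Int!) {
    repository(owner: $owner, name: $name) {
      pullRequest(number: $number) {
        headRefName
        headRefOid
        headRepository { url }
      }
    }
  }`;

function buildPrHeadRequest({ token, owner, name, number }) {
  return {
    url: "https://api.github.com/graphql",
    options: {
      method: "POST",
      headers: { Authorization: `Bearer ${token}`, "Content-Type": "application/json" },
      body: JSON.stringify({ query: PR_HEAD_QUERY, variables: { owner, name, number } }),
    },
  };
}

// Pull out what the build needs: where to clone from and which SHA to check out.
function parsePrHead(json) {
  if (json.errors) throw new Error(json.errors.map((e) => e.message).join("; "));
  const pr = json.data.repository && json.data.repository.pullRequest;
  if (!pr) throw new Error("Pull request not found");
  if (!pr.headRepository) throw new Error("Head repository no longer exists (deleted fork?)");
  return { sha: pr.headRefOid, ref: pr.headRefName, cloneUrl: `${pr.headRepository.url}.git` };
}

async function fetchPrHead(params) {
  const { url, options } = buildPrHeadRequest(params);
  const res = await fetch(url, options);
  if (!res.ok) throw new Error(`GitHub GraphQL returned ${res.status}`);
  return parsePrHead(await res.json());
}
```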

6. Docker: start build

This part is a little hand-wavy, but imagine we have a server with Docker installed. We can build an image that has our write-good npm package installed. We can then run a Docker container that will:

- clone our repo

- check out the correct SHA or ref

- run our tests

We then capture our build status and store it against our BuildID.
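A hand-wavy step deserves a hedged sketch. This assumes a hypothetical image named `readme-lint` with `git` and write-good installed globally, and assumes the lint command exits non-zero when it finds problems (check that against the write-good version you install). The clone URL and SHA are passed as environment variables rather than pasted into the shell script, so a hostile branch name can’t inject commands. The exit code is what you would store against the BuildID.

```javascript
const { spawn } = require("child_process");

// Build the argument list for `docker run`; `image` is a hypothetical image name.
function dockerRunArgs({ image, cloneUrl, sha }) {
  const script = 'git clone "$REPO_URL" repo && cd repo && git checkout "$SHA" && write-good README.md';
  return ["run", "--rm", "-e", `REPO_URL=${cloneUrl}`, "-e", `SHA=${sha}`, image, "sh", "-c", script];
}

// Run one build in a throwaway container and resolve with its exit code
// (null if the container was killed by a signal).
function runBuild(job) {
  return new Promise((resolve, reject) => {
    const child = spawn("docker", dockerRunArgs(job), { stdio: "inherit" });
    child.on("error", reject); // e.g. docker is not installed
    child.on("close", (code) => resolve(code));
  });
}
```

Private repos would also need credentials for the clone, which this sketch leaves out.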

7. GitHub API: Status Update: build is complete

Notify GitHub that the build is over and either passed or failed.
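A sketch of the final status call, again against `POST /repos/{owner}/{repo}/statuses/{sha}` with Node 18+ `fetch`. It assumes the container’s exit code is the build result (0 means clean prose). GitHub tracks one status per `context` per commit, so reusing the context sent with the “pending” status replaces that entry instead of adding a second one; `"editorial/write-good"` is a made-up value.

```javascript
// Map the container's exit code to one of GitHub's status states.
function finalStatus(exitCode) {
  return exitCode === 0
    ? { state: "success", description: "README prose looks good" }
    : { state: "failure", description: "write-good found suggestions" };
}

async function markComplete({ token, owner, repo, sha, exitCode, targetUrl }) {
  const { state, description } = finalStatus(exitCode);
  const res = await fetch(
    `https://api.github.com/repos/${encodeURIComponent(owner)}/${encodeURIComponent(repo)}/statuses/${sha}`,
    {
      method: "POST",
      headers: {
        Authorization: `Bearer ${token}`,
        Accept: "application/vnd.github+json",
        "Content-Type": "application/json",
        "User-Agent": "readme-lint-sketch",
      },
      // Same hypothetical context as the pending status, so it gets replaced.
      body: JSON.stringify({ state, description, target_url: targetUrl, context: "editorial/write-good" }),
    },
  );
  if (res.status !== 201) throw new Error(`Status update failed: ${res.status}`);
}
```

If the build itself crashes (Docker unavailable, clone fails for reasons unrelated to the prose), GitHub’s `error` state is a better fit than `failure`.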


What didn’t I think of?

I’m sure a ton. After all, I just built the pieces of tech, and didn’t tie them together at all. But it sure was fun!

All of the code I used ended up in Gists and, more generally, in a Git repo.

Documentation for endpoints

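The GitHub API endpoints we end up using:

- GraphQL: POST https://api.github.com/graphql (listing repos, looking up a pull request’s head commit)
- REST: POST /repos/{owner}/{repo}/hooks (registering a webhook)
- REST: POST /repos/{owner}/{repo}/statuses/{sha} (reporting build status)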

PS: you should absolutely use the GitHub GraphQL API Explorer to explore the GraphQL API. It’s awesome: once you get one specific query to work, you can edit it and try other things.
